Meta Is Watching. Are You Ready For When Your Employees Ask If You Are Too?

Key Takeaways

- The Meta angle: Meta is installing keystroke/screenshot trackers on employee computers. Leadership teams everywhere are now asking whether they should do the same.

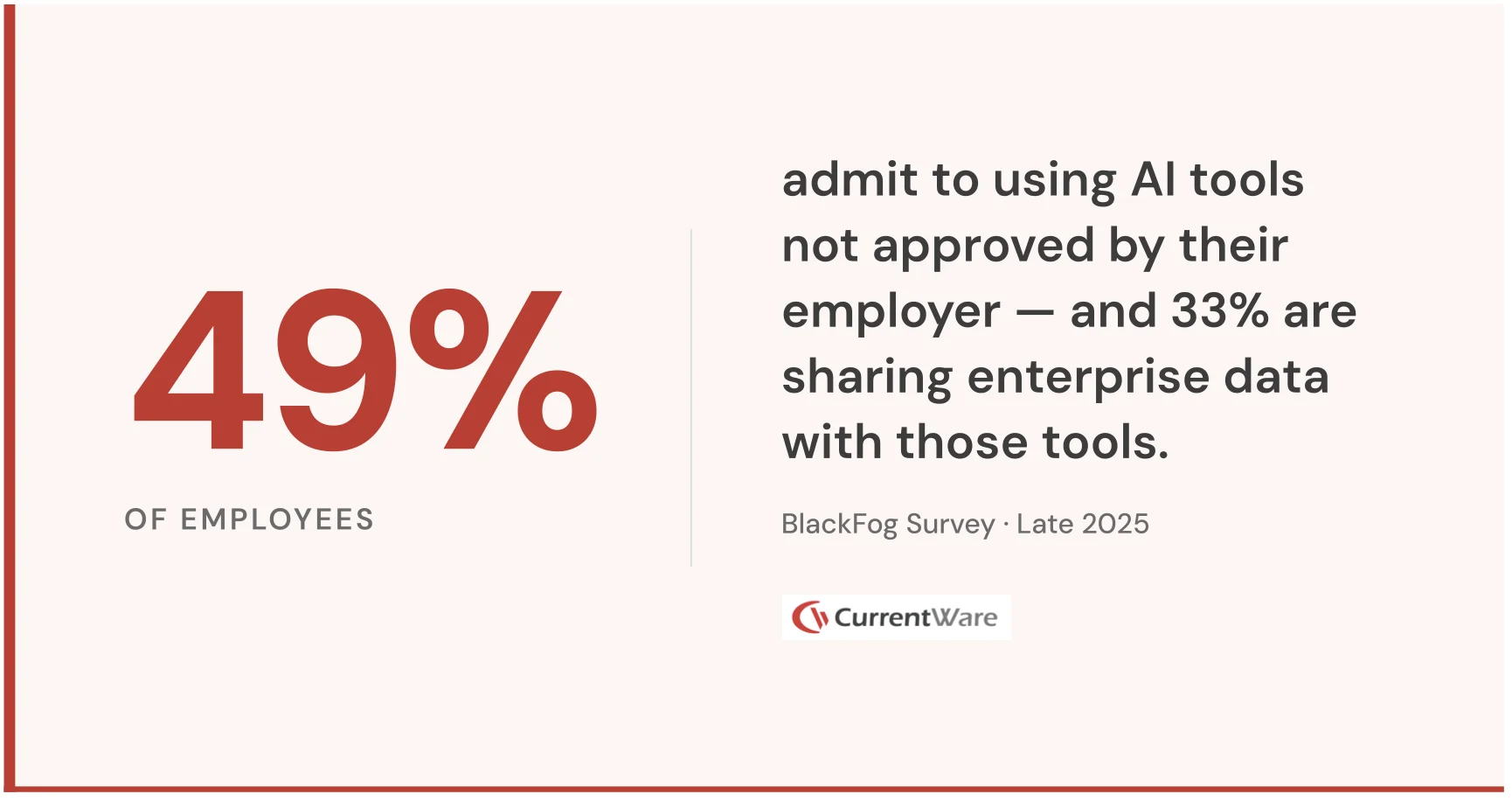

- The real story: Workforce monitoring in 2026 isn't just about productivity. It's being driven by insider risk ($19.5M average annual cost), shadow AI (49% of employees using unapproved tools), data leakage, and software waste ($19.8M in unused licenses).

- Where organizations fail: Treating it as a comms problem ("we promise not to misuse this") instead of a governance problem (building technical limits that make misuse impossible).

- The legal minefield: No single global policy works. The EU, Germany, France, Quebec, and several U.S. states all have distinct requirements.

- The bottom line: Companies that move now with clear policy + technical guardrails will set the internal narrative. Those that wait will react to a trust incident or regulatory inquiry instead.

Meta made headlines this week when Reuters reported the company is installing tracking software on U.S. employee computers, capturing mouse movements, clicks, keystrokes, and periodic screen snapshots as training data for its AI models. The program, called the Model Capability Initiative, sits inside Meta's broader push to build AI agents capable of performing knowledge work autonomously.

Meta was quick to add a qualifier: the data will not be used for performance evaluations.

That one sentence reveals everything about where enterprise workforce monitoring is headed in 2026, and why so many organizations will get it wrong.

The Question Coming to Every Leadership Team

Meta's announcement didn't happen in a vacuum. It landed in the middle of a broader cultural moment: mass layoffs, aggressive headcount cuts, and very public commentary from investors about using AI to identify who's actually contributing and who isn't. Employees aren't reading this as a neutral AI research update. They're reading it through everything else happening around them.

This is the dynamic that will define workforce monitoring conversations inside organizations for the next 12 months. The technology is mature. The business case is clear. But the employee trust question (what are you collecting, why, and what will you actually do with it?) is where most programs will succeed or fail.

Organizations that treat this as a communications problem will get it wrong. It's a governance problem. When monitoring infrastructure exists, employees will assume it will be used for the broadest possible purpose, regardless of what the launch memo says. The answer isn't better messaging. It's building a system where stated limits are structurally enforceable.

Why This Trend Is Accelerating Now

Workforce monitoring isn't new. What's new is the combination of forces making it urgent.

Remote and hybrid work permanently changed the visibility equation. Microsoft's Work Trend Index found that 85% of leaders say the shift to hybrid work has made it challenging to have confidence that employees are being productive. Meanwhile, Gallup's February 2025 tracking shows 52% of the U.S. remote-capable workforce now works hybrid. That gap between leadership confidence and workforce reality is where monitoring demand comes from.

At the same time, AI has changed what's possible with activity data. Raw logs were always available. What's new is AI's ability to turn that data into insight at scale: identifying who does focused work versus who fills meeting time, where collaboration is genuine, and where output is concentrated in a few people. This is the shift from monitoring as a compliance tool to monitoring as a management intelligence layer.

And then there's the AI training angle Meta is pursuing. The most valuable training data for the next generation of enterprise AI isn't scraped from the internet. It's the behavioral record of how skilled knowledge workers actually do their jobs. The race to capture that data, responsibly or otherwise, is just beginning.

What Organizations Are Actually Using This For

Adoption in 2026 is being driven by a broader set of use cases than most people realize.

Productivity and focus time. Understanding how work time is actually distributed across meetings, tools, and execution. Organizations are using this data to identify where meeting load is destroying productive time, where workloads are unsustainable, and where process inefficiencies create hidden overhead.

Insider risk and data security. The Ponemon Institute's 2026 Cost of Insider Risks Global Report found that insider incidents now cost organizations an average of $19.5 million annually, driven primarily by negligence rather than malice. IBM's 2024 Cost of a Data Breach Report shows the average malicious insider breach costs $4.99 million and takes 292 days to identify and contain. Behavioral monitoring is the primary tool for closing that detection gap.

Shadow AI and unauthorized tool visibility. This is the fastest-moving use case right now. A BlackFog survey conducted in late 2025 found that 49% of employees admit to using AI tools not approved by their employer, and 33% are sharing enterprise research or datasets with those tools. IBM's 2025 Cost of a Data Breach Report found that shadow AI incidents cost organizations $670,000 more than standard breaches on average. Most organizations can't see this happening. Workforce monitoring changes that.

Data loss prevention. As employees paste sensitive content into public AI interfaces, upload files to personal accounts, or transfer data to external platforms, behavioral monitoring is increasingly the first line of detection, identifying anomalies before they become incidents.

Software license optimization. Zylo's 2026 SaaS Management Index found that organizations waste an average of $19.8 million annually on unused software licenses, with roughly half of all provisioned licenses going unused in a given month. Application usage data gives IT and finance teams the visibility to reclaim that spend, and in many organizations, this alone justifies the investment.
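As a rough illustration of how application usage data can feed license reclamation, here is a minimal Python sketch. The function name `find_reclaimable_licenses`, the 30-day idle threshold, and the shape of the input (a mapping from user to last-used date) are all assumptions made for this example, not a description of any particular product.

```python
from datetime import date, timedelta


def find_reclaimable_licenses(last_used, today, idle_days=30):
    """Return users whose license looks idle (hypothetical helper).

    last_used maps a user ID to the date the application was last opened,
    or None if no usage was ever recorded. The 30-day default is an
    illustrative threshold, not a recommendation.
    """
    cutoff = today - timedelta(days=idle_days)
    return sorted(
        user
        for user, last in last_used.items()
        if last is None or last < cutoff
    )


# Illustrative data only.
usage = {
    "alice": date(2026, 3, 28),
    "bob": date(2026, 1, 5),
    "carol": None,
}
idle = find_reclaimable_licenses(usage, today=date(2026, 4, 1))
```

In practice the threshold and the definition of "used" would be set jointly by IT and finance, and the result would be a review list rather than an automatic revocation.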

Workforce optimization. As pressure to run leaner increases, behavioral data provides an evidentiary foundation for decisions about contribution and headcount that self-reported performance reviews cannot. High performers carrying disproportionate output become visible. So does the inverse.

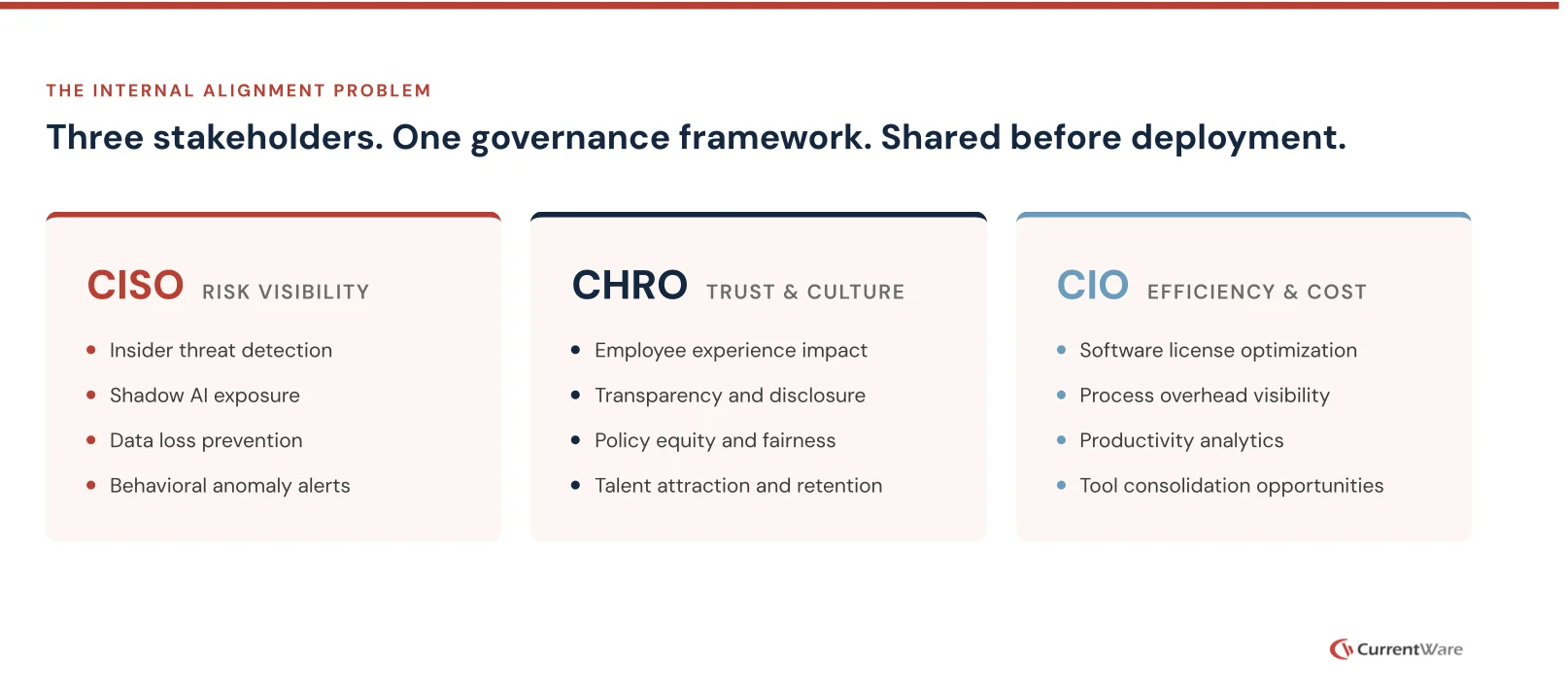

The Internal Alignment Problem

Monitoring programs often fail not because of the technology but because of internal misalignment. CISOs want risk visibility. CHROs worry about trust and culture. CIOs want system-wide efficiency and cost control. Organizations that align these three stakeholders on a shared governance framework before deployment build durable programs. Those that don't end up with fragmented rollouts, policy gaps, and the worst of all outcomes: monitoring infrastructure that creates liability without delivering insight.

The Legal Landscape Is More Fragmented Than Most Realize

Reuters noted a detail that deserves more attention: this type of monitoring is illegal in some countries, including Italy.

For any organization operating across multiple markets, that's not a footnote. The EU requires monitoring to be proportionate, clearly disclosed, and limited to what's necessary for a legitimate purpose. Germany gives employee representatives significant influence over monitoring programs before they go live. France requires consultation. Quebec's privacy legislation creates distinct obligations that go beyond Canada's federal requirements. Several U.S. states, including Connecticut, New York, and Delaware, require explicit employee notice before electronic monitoring begins.

There is no single policy that works everywhere. Organizations that design a U.S.-centric monitoring program and roll it out globally without jurisdiction-specific review are creating legal exposure their teams may not have fully mapped.

What Getting This Right Actually Looks Like

The organizations navigating this well treat policy design as seriously as technology selection. The platform is the easier decision. The governance framework is what determines whether the program holds up.

Scope limitations need to be technical, not just documented. If monitoring data won't be used for performance evaluations, that constraint should be built into the system, through access controls that prevent misuse, not just policy language that depends on goodwill. Jurisdiction-aware data handling matters too: what's collected in Germany should be governed by German rules, and a platform that can't enforce that at the data layer is a compliance liability regardless of how good the documentation looks.
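To make "technical, not just documented" concrete, here is a minimal Python sketch of a purpose-bound, jurisdiction-aware access check. Everything in it is hypothetical: the `Purpose` values, the `ALLOWED_PURPOSES` table, and the per-jurisdiction entries are illustrative assumptions, not legal guidance and not any vendor's API. The point is the shape: access is denied by default, and performance evaluation is simply not a purpose the system can grant.

```python
from dataclasses import dataclass
from enum import Enum


class Purpose(Enum):
    SECURITY_INVESTIGATION = "security_investigation"
    LICENSE_OPTIMIZATION = "license_optimization"
    PERFORMANCE_EVALUATION = "performance_evaluation"


# Hypothetical policy table: purposes permitted per collection jurisdiction.
# PERFORMANCE_EVALUATION is listed nowhere, so no request for it can succeed.
# The specific entries are placeholders, not a statement of what any law allows.
ALLOWED_PURPOSES = {
    "US": {Purpose.SECURITY_INVESTIGATION, Purpose.LICENSE_OPTIMIZATION},
    "DE": {Purpose.SECURITY_INVESTIGATION},
}


@dataclass(frozen=True)
class AccessRequest:
    requester: str
    purpose: Purpose
    jurisdiction: str  # where the data was collected, not where the requester sits


def is_access_allowed(request: AccessRequest) -> bool:
    """Deny by default; allow only purposes listed for the data's jurisdiction."""
    return request.purpose in ALLOWED_PURPOSES.get(request.jurisdiction, set())


# A manager asking for activity data to support a performance review is refused.
review = AccessRequest("manager-17", Purpose.PERFORMANCE_EVALUATION, "US")
allowed = is_access_allowed(review)
```

Keying the check on where the data was collected, rather than on who is asking, is what lets one platform apply different rules to different regions.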

Employees should have visibility into what's collected about them, how it's categorized, and how long it's retained. It feels counterintuitive, but transparency consistently reduces the fear of hidden use more than any communications effort. Audit trails on data access, and defined retention windows with automated deletion, complete the picture: they make accountability enforceable and signal that the program has real limits.
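Audit trails and retention windows can be sketched in a few lines of Python. The `MonitoringStore` class, its field names, and the 90-day window below are assumptions chosen for illustration; a real deployment would sit on a database with tamper-resistant logging. The sketch shows two properties: every read leaves a log entry before data is returned, and records older than the retention window are deleted by a routine rather than by someone remembering to do it.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass
class ActivityRecord:
    employee_id: str
    collected_at: datetime


@dataclass
class MonitoringStore:
    """Hypothetical in-memory store with an audit log and a retention window."""

    retention: timedelta
    records: list[ActivityRecord] = field(default_factory=list)
    audit_log: list[tuple] = field(default_factory=list)

    def read(self, requester, employee_id, reason, now):
        # Log the access first, so there is no way to read without a trace.
        self.audit_log.append((now, requester, employee_id, reason))
        return [r for r in self.records if r.employee_id == employee_id]

    def purge_expired(self, now):
        # Drop everything older than the retention window; return the count removed.
        cutoff = now - self.retention
        before = len(self.records)
        self.records = [r for r in self.records if r.collected_at >= cutoff]
        return before - len(self.records)


now = datetime(2026, 4, 1, tzinfo=timezone.utc)
store = MonitoringStore(retention=timedelta(days=90))  # 90 days is illustrative
store.records.append(ActivityRecord("emp-1", now - timedelta(days=100)))
store.records.append(ActivityRecord("emp-1", now - timedelta(days=10)))

removed = store.purge_expired(now)
visible = store.read("secops-4", "emp-1", "incident review", now)
```

The same audit log can back an employee-facing view of who accessed their data and why, which is where transparency and enforceability meet.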

The Talent Dimension

Candidates in knowledge work roles are starting to ask about monitoring policies during hiring. The organizations most likely to implement monitoring carelessly, maximum surveillance with minimum transparency, are also the ones most likely to drive away the high performers that workforce analytics is supposed to surface.

Organizations that build monitoring programs with the same rigor they bring to customer data privacy will find the opposite dynamic. Clarity, visible limits, and genuine respect for employee experience are not constraints on the value of workforce intelligence. They are what makes it sustainable.

The Window to Get Ahead of This

Meta's story has put workforce monitoring on every leadership team's agenda. HR leaders, legal counsel, CISOs, and employees are all forming views about it right now, before most organizations have made deliberate decisions.

Organizations that move now with a clear, policy-grounded approach will shape the internal conversation rather than react to it. The alternative, waiting for a trust incident, a regulatory inquiry, or a talent signal to force the question, is the more expensive path.

Meta said its keystroke data won't be used for performance evaluations. The real question is whether it has built a system where that commitment is actually enforceable, and visible enough that employees have reason to believe it.

That's the standard. And it's reachable, for organizations that design it from the start.

CurrentWare enables organizations to operationalize workforce intelligence, with enforceable governance across productivity, AI usage, insider risk, and software spend. Built for leaders who need more than visibility. They need control. Learn more at currentware.com.